Researchers from MaX optimized Quantum ESPRESSO to run many k-point calculations in parallel on GPUs, significantly speeding up electronic structure and phonon computations for metals with small unit cells.

Application sectors: Energy (materials for power generation) • Mobility (structural metals) • Manufacturing.

Keywords: Density Functional Theory • GPU acceleration • plane-wave pseudopotentials • Kohn-Sham equations • Fourier transforms • Davidson algorithm • tungsten • HPC • materials modelling.

Understanding the behaviour of metals at the atomic scale is crucial for applications ranging from energy generation to aerospace materials. Density Functional Theory (DFT) allows scientists to predict electronic, structural, and vibrational properties of materials from first principles. This approach is widely used but computationally demanding, particularly for metals, as metals often require a very fine sampling of the Brillouin zone with tens or hundreds of thousands of k points to accurately describe their electronic structure. Standard computational methods can struggle with this workload, even on modern supercomputers.

MaX lighthouse code Quantum ESPRESSO is a widely used software package for electronic structure calculations using plane-wave pseudopotentials. While GPU acceleration has been implemented, small unit-cell metals with dense k-point grids often see little performance improvement. The current GPU approach spends excessive time transferring small datasets to the GPU, leaving many GPU cores underutilized. The challenge was to improve GPU efficiency for systems with small unit cells but massive k-point requirements.

A team of researchers developed a novel GPU-optimized approach that processes many k points simultaneously. This method accelerates both the application of the Kohn-Sham Hamiltonian and the solution of linear systems in Density Functional Perturbation Theory (DFPT). In tests on body centered cubic (bcc) tungsten, the new approach achieved up to six times faster calculations than the standard GPU implementation and about twice as fast as CPU-only runs, significantly reducing total computational time and core-hour costs.

Parallelizing k points on GPUs

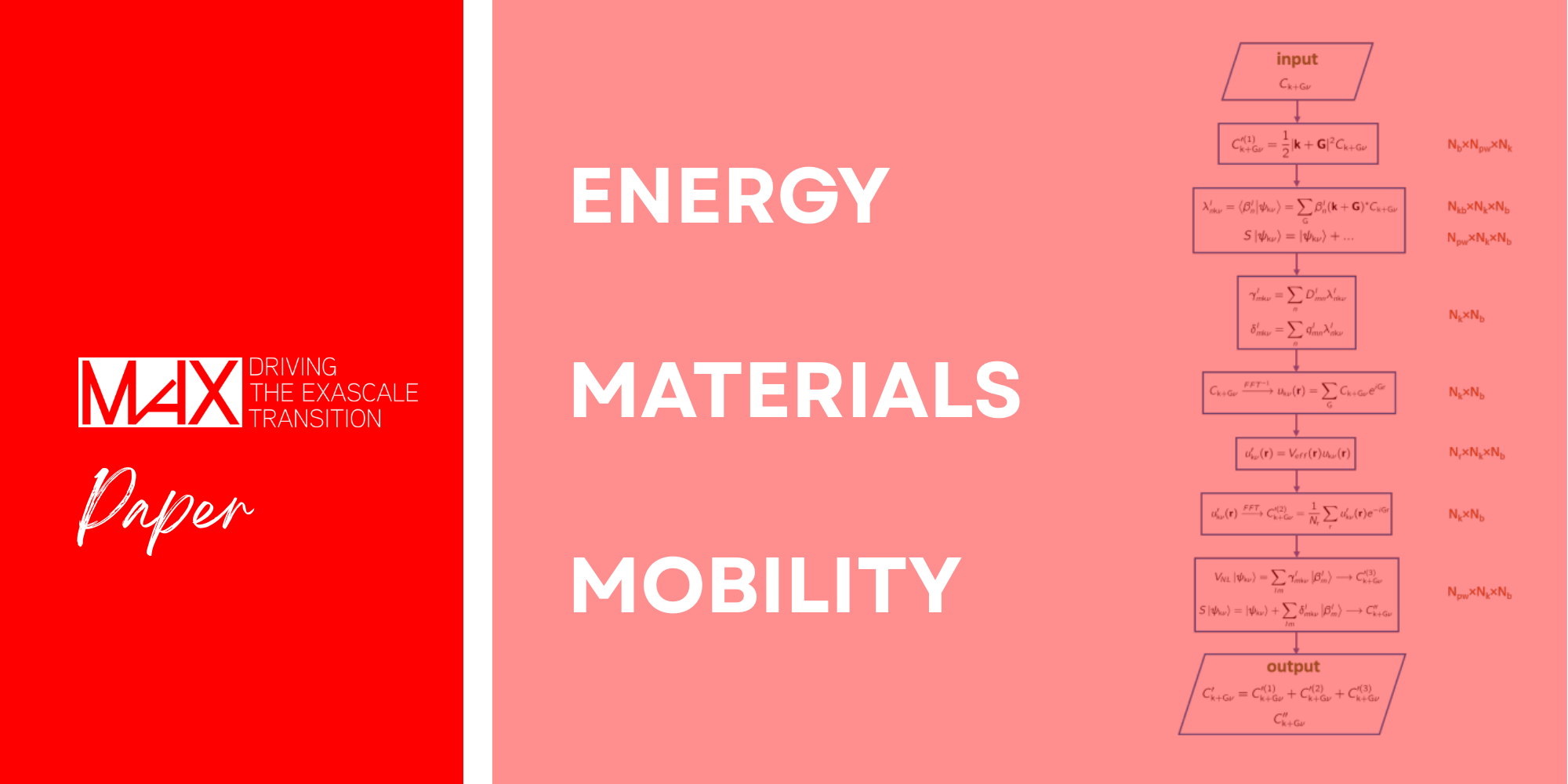

The researchers focused on optimizing the most time-consuming operations: applying the Kohn-Sham Hamiltonian and overlap matrix to wave-functions, performing Fourier transforms, and solving the generalized eigenvalue problem in the Davidson algorithm. The key idea was to allocate as many wave-functions (k points) as GPU memory allowed and run operations for all these points in parallel. Each GPU thread handles a single wave-function or part of it, increasing the workload per GPU and improving efficiency. CUDA Fortran was used to implement both GLOBAL routines (called from the CPU) and DEVICE routines (called from other GPU threads) to achieve this fine-grained parallelization.

The team benchmarked the approach on the Leonardo supercomputer at CINECA, using up to 32 CPU cores and 8 NVIDIA Ampere GPUs per calculation node. By carefully choosing the number of k points per GPU block (Nk), they maximized GPU memory usage while ensuring the simultaneous execution of thousands of threads.

Quantum ESPRESSO with thermo_pw driver

The study relied on Quantum ESPRESSO, enhanced with the thermo_pw driver package. Thermo_pw orchestrates self-consistent DFT and DFPT calculations for phonon and elastic properties. The researchers modified Quantum ESPRESSO’s routines for Fourier transforms (FFT) and linear algebra to run as DEVICE routines directly on the GPU, overcoming the limitations of standard libraries such as cuFFT and cuSolver, which are designed for HOST calls.

Transforming CPU workflows to GPU

Expertise from the MaX community enabled the team to identify bottlenecks in FFTs, the application of nonlocal pseudopotentials, and small-matrix diagonalizations. By rewriting the FFT and LAPACK routines with DEVICE attributes, they could call them directly within GPU threads. Recursive routines, unsupported on GPUs, were replaced with iterative algorithms.

This redesign allowed multiple k points to be processed in parallel, significantly reducing GPU idle time. The approach required deep knowledge of both GPU architecture and Quantum ESPRESSO workflows, including memory allocation, thread management, and linear algebra optimization.

Benchmarking on bcc tungsten

The researchers benchmarked their method on a tungsten system with 11 bands, 32 × 32 × 32 FFT grid points, and over 182,000 k points. Key findings:

- FFT operations: Optimized GPU routines were 1.9× faster than standard GPU routines.

- Hamiltonian and overlap application: Optimized GPU code ran 6× faster than the standard GPU implementation.

- Diagonalization of small matrices: Simultaneous handling of multiple k points reduced the time from 18× CPU time to 3× CPU time.

- Total runtime: Optimized GPU calculations reduced wall-clock time by ~6× compared to standard GPU code and ~2× compared to CPU-only runs.

This performance gain translates into lower computational costs and enables simulations of metallic systems previously considered too demanding.

Efficient GPU acceleration for metals

This research demonstrates that plane-wave pseudopotential codes can be significantly accelerated for metallic systems with small unit cells but large k-point meshes by processing multiple k points concurrently on GPUs. The implementation in CUDA Fortran, with careful use of GLOBAL and DEVICE routines, maximizes GPU utilization and reduces idle time. The optimized approach enables faster computation of electronic structures, phonon spectra, and thermodynamic properties of metals. This improvement benefits multiple application areas, including:

- Energy: Accelerated modeling of high-performance metals for reactors or turbines.

- Mobility: Designing stronger, lighter structural metals for aerospace and automotive sectors.

- Materials Science: Rapidly computing properties of complex metallic alloys.

In addition, the study highlights how GPU-aware algorithm design can unlock previously untapped HPC potential, reducing the cost of large-scale calculations.

The software developed is distributed under the GPL license within thermo_pw, allowing the community to adopt and extend these optimizations.

Talk to us or see the code to implement these GPU strategies for your materials simulations.